When you A/B test visual design elements, you identify which colors, images, layouts, and call-to-action buttons resonate best with your audience. Changing small details systematically helps you optimize user engagement, improve conversions, and build pages that match what your users prefer. Isolate one element at a time, analyze results carefully, and let data guide your decisions. The sections below cover which elements to test, how to design and analyze experiments, and how to keep refining your visuals over time.

Key Takeaways

- Isolate specific visual elements (e.g., buttons, images, headlines) to test their impact on user engagement.

- Use controlled variants to compare different colors, sizes, layouts, and placements systematically.

- Ensure statistical significance by selecting appropriate sample sizes and randomizing visitor groups.

- Measure key metrics like click-through rate, conversions, and bounce rate to evaluate visual effectiveness.

- Continuously analyze results, iterate designs, and document insights to optimize visual appeal and performance.

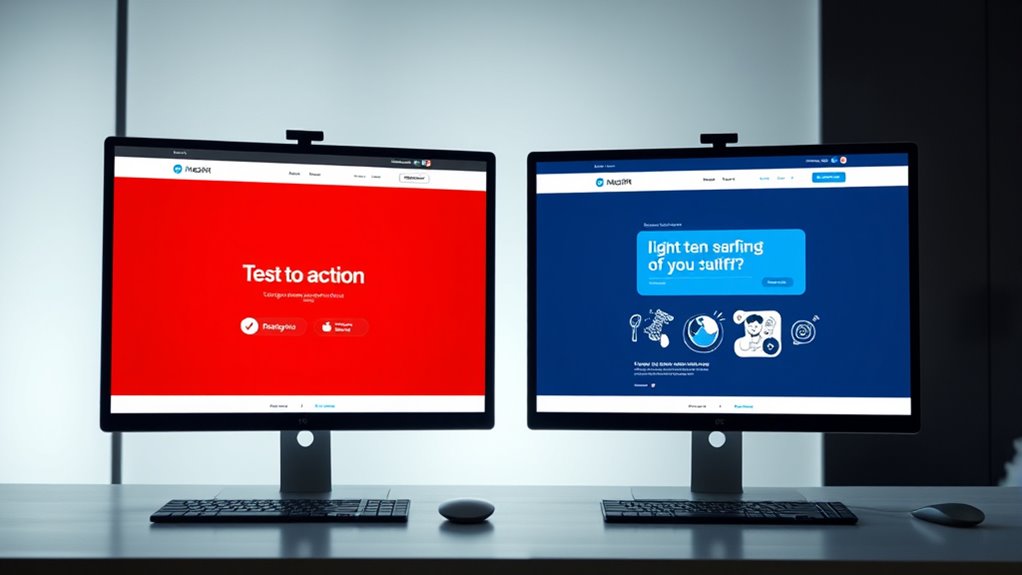

Understanding the Importance of Visual Variations

Visual variations matter because even small changes can greatly affect user engagement and conversion rates. When you tweak colors, layouts, or images, you influence how visitors perceive your site and whether they take action. A subtle difference can make a call-to-action button more noticeable or make a page feel more inviting. Understanding how these variations affect user behavior lets you optimize your design without overhauling everything at once. By systematically testing different visual elements, you learn what resonates with your audience and can make informed decisions that boost conversions and improve the overall user experience. Precision matters: testing one well-defined change at a time keeps your attention on the changes that truly make a difference. Pay attention to color accuracy and contrast ratios as well, since text and buttons that are hard to read can undermine an otherwise promising variant. Finally, design that sparks curiosity can encourage visitors to explore your content more deeply, and clearly presented, credible information builds the trust that supports their decisions.

Key Elements to Test in Visual Design

When conducting A/B tests on visual design, focusing on a few key elements can lead to significant improvements in user engagement and conversions. Start with your headlines: test fonts, sizes, and colors, since these directly affect readability and attention. Next, experiment with images and graphics by trying different styles and placements, or removing them entirely, to see what resonates. Button design also matters; test variations in color, size, and wording to optimize click-through rates. Layout and spacing influence how users navigate your page, so experiment with different arrangements to improve flow. Visual hierarchy plays a crucial role in guiding users’ attention and making your content intuitive to navigate, and color schemes evoke emotions that shape how users perceive the page. Finally, test how highlighted elements draw focus, since emphasis can guide users toward the actions you want them to take.
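When you test button color variants, it is worth confirming that the label stays readable on each candidate color before the test goes live. The sketch below computes the WCAG 2.x contrast ratio between white button text and each background color and compares it with the 4.5:1 AA threshold for normal-size text. It assumes white text on a solid button background; the variant names and hex colors are hypothetical placeholders.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, as in the WCAG 2.x definition."""
    c = channel / 255
    # WCAG 2.x text uses 0.03928 as the cutoff; 0.04045 is the sRGB value.
    # No 8-bit channel value falls between the two, so results are identical.
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' color, from 0.0 (black) to 1.0 (white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio, from 1:1 (identical colors) to 21:1 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


AA_NORMAL_TEXT = 4.5  # WCAG AA minimum for normal-size text

# Hypothetical button background colors, each carrying white label text.
button_variants = {"control": "#2e7d32", "variant_b": "#ff9900"}

for name, background in button_variants.items():
    ratio = contrast_ratio("#ffffff", background)
    verdict = "passes" if ratio >= AA_NORMAL_TEXT else "fails"
    print(f"{name}: {ratio:.2f}:1, {verdict} WCAG AA for normal text")
```

Screening out variants that fail this check keeps a low-contrast color from "losing" a test simply because visitors could not read the label.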

Designing Effective A/B Test Experiments

Designing effective A/B test experiments requires careful planning to get meaningful results. Start by defining clear objectives so you know exactly what you want to learn. Identify the specific visual elements you’ll test, such as colors, layouts, or images, and create variants that isolate each change. Make sure your sample size is large enough to detect the effect you care about with statistical significance; a sample size calculator can help. Randomly assign visitors to control and test groups to eliminate bias, and keep each visitor in the same group for the whole test. Keep other variables constant so you measure only the impact of your visual change. Decide on the duration of the test, balancing enough time to gather data against the risk of external influences, such as holidays or marketing campaigns, skewing results. Consider, too, how different audience segments may respond differently, and factor user experience into how you interpret results. Proper planning maximizes your chances of obtaining actionable insights.
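Two of these planning steps, sizing the test and assigning visitors, can be sketched in a few lines. This is a minimal Python sketch, assuming a conversion-rate metric, a two-sided test at 5% significance with 80% power, and the standard normal-approximation formula for comparing two proportions. The function names, experiment name, and rates are hypothetical. Assignment hashes the visitor ID together with the experiment name, a common stand-in for a coin flip that also keeps each visitor in the same group on every visit.

```python
import hashlib
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, expected_rate: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH group to detect baseline -> expected (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def assign_variant(visitor_id: str, experiment: str,
                   variants: tuple = ("control", "variant_b")) -> str:
    """Hash-based assignment: stable for a visitor, roughly uniform across variants.

    Including the experiment name means a visitor's bucket in one test
    does not determine their bucket in another.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


if __name__ == "__main__":
    # Hypothetical goal: lift a 5% conversion rate to 6%.
    print(sample_size_per_group(0.05, 0.06))
    print(assign_variant("visitor-123", "cta-color-test"))
```

With a 5% baseline and a hoped-for 6%, the formula calls for a little over 8,000 visitors in each group. Because the required size scales with one over the squared effect, halving the expected lift roughly quadruples it.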

Analyzing Results and Making Data-Driven Decisions

Once you’ve collected enough data from your A/B test, analyzing the results accurately is essential for making informed decisions. Focus on key metrics such as click-through rates, conversions, and bounce rates. Use a statistical significance test to determine whether differences are meaningful or likely due to chance. Visualize the data with charts to spot trends quickly, and consider segmenting results by user demographics to uncover insights that an aggregate number can hide. Also check how cookies affect your data collection: visitors who block or clear cookies may go untracked or be reassigned to a different variant, which can skew your counts.
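For conversion-style metrics, one common significance test is the two-proportion z-test. The sketch below assumes samples large enough for the normal approximation to hold and a two-sided test at a 5% significance level. The visitor and conversion counts, and the mobile/desktop split, are hypothetical. Running the same function per segment shows how a result can look different across groups.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test comparing conversion rates of control (a) and variant (b)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # no conversions at all, or every visitor converted
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: (control conversions, control visitors, variant conversions, variant visitors)
segments = {
    "all visitors": (400, 8000, 480, 8000),
    "mobile": (150, 3500, 170, 3500),
    "desktop": (250, 4500, 310, 4500),
}

for segment, (ca, na, cb, nb) in segments.items():
    z, p = two_proportion_z_test(ca, na, cb, nb)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{segment}: z = {z:.2f}, p = {p:.4f} ({verdict} at alpha = 0.05)")
```

Checking many segments raises the chance that one of them looks significant by luck, so treat a segment-only win as a hypothesis for a follow-up test rather than a final result.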

Best Practices for Continuous Optimization

To achieve ongoing success, you need to prioritize continuous optimization by regularly reviewing your test results and making iterative improvements. Schedule consistent check-ins to analyze new data, and look for patterns that reveal what works best. Avoid complacency—what improves metrics today may change tomorrow as user behavior evolves. Use your insights to refine your visuals, headlines, and calls-to-action incrementally. Always test one variable at a time to isolate effects accurately. Document your changes and outcomes to build a knowledge base for future experiments. Stay flexible and open to new ideas, and don’t hesitate to revisit previous winners for further enhancement. Emphasizing ongoing refinement ensures your visual elements stay aligned with user preferences, maximizing engagement and conversion over time.
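A lightweight way to document changes and outcomes is an append-only log with one record per experiment. The sketch below assumes a JSON Lines file and a hypothetical set of fields; a shared spreadsheet or your testing tool's built-in notes would serve the same purpose. The `history_for` helper (also hypothetical) pulls up past tests on one element, which is handy before revisiting a previous winner.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class ExperimentRecord:
    name: str
    element: str         # the single visual element that changed
    control: str
    variant: str
    primary_metric: str
    outcome: str         # e.g. "variant won", "no significant difference"
    notes: str = ""
    run_date: str = field(default_factory=lambda: date.today().isoformat())


def append_record(path: str, record: ExperimentRecord) -> None:
    """Append one experiment as a JSON line so earlier entries are never overwritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def load_records(path: str) -> list[ExperimentRecord]:
    """Read every logged experiment back into records."""
    with open(path, encoding="utf-8") as f:
        return [ExperimentRecord(**json.loads(line)) for line in f if line.strip()]


def history_for(records: list[ExperimentRecord], element: str) -> list[ExperimentRecord]:
    """Past experiments on one element, oldest first."""
    return [r for r in records if r.element == element]


if __name__ == "__main__":
    # Demo writes to a throwaway directory; in practice use a shared, persistent path.
    log_path = os.path.join(tempfile.mkdtemp(), "experiments.jsonl")
    append_record(log_path, ExperimentRecord(
        name="cta-color-test", element="CTA button", control="blue",
        variant="green", primary_metric="click-through rate", outcome="variant won"))
    for record in history_for(load_records(log_path), "CTA button"):
        print(record.name, record.outcome)
```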

Frequently Asked Questions

How Do I Prioritize Which Visual Elements to Test First?

When deciding which visual elements to test first, focus on those with the greatest likely impact on user engagement and conversions, such as buttons, headlines, images, and layout. Use analytics to identify where users drop off or spend the most time. Prioritize elements that can deliver quick wins or substantially move your primary metric, and start with changes that are easy to implement and measure.
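One common way to turn this advice into a ranked backlog is an ICE score (Impact, Confidence, Ease). ICE is a general prioritization heuristic, not something this guide prescribes, and teams score it in different ways; the sketch below simply averages three 1-10 ratings. Confidence stands in for what your analytics show, such as a known drop-off point. The ideas and scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    element: str
    impact: int      # 1-10: expected effect on the primary metric
    confidence: int  # 1-10: strength of supporting evidence, e.g. an analytics drop-off
    ease: int        # 1-10: how cheap the change is to build and measure

    @property
    def ice_score(self) -> float:
        return (self.impact + self.confidence + self.ease) / 3


def prioritize(ideas: list[TestIdea]) -> list[TestIdea]:
    """Highest ICE score first; ties keep their original order (sorted is stable)."""
    return sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)


# Hypothetical backlog of visual test ideas.
backlog = [
    TestIdea("Page layout", impact=8, confidence=4, ease=3),
    TestIdea("CTA button color", impact=6, confidence=7, ease=9),
    TestIdea("Hero image", impact=7, confidence=5, ease=6),
]

for idea in prioritize(backlog):
    print(f"{idea.element}: {idea.ice_score:.1f}")
```

A big layout change can still score lower than a quick button tweak here, which matches the advice to start with changes that are easy to implement and measure.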

What Tools Are Best for Conducting A/B Visual Testing?

Choosing a testing tool is like choosing any tool for a complex task: options range from user-friendly platforms to advanced analytics suites. For A/B visual testing, Optimizely and VWO are well-established choices. Google Optimize was also popular, but Google discontinued it in September 2023, so you’ll need an alternative if you relied on it. Look for an intuitive interface, detailed reporting, and easy integration with your website. Start with free or trial versions to see which fits your needs best, then scale up as your testing skills and goals grow.

How Many Variations Should I Include in Each Test?

Include enough variations to gather meaningful data without spreading your traffic too thin. Generally, start with 2-4 variations to compare against your control. This lets you identify clear differences while keeping the test manageable. Each visitor sees only one version, so every extra variation splits your traffic into smaller groups and increases the total number of visitors, and the time, needed to reach significance. If you have more ideas, run them in later rounds or segment your tests. Focus on quality over quantity to keep your results reliable.
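To see why extra variations cost traffic, the sketch below estimates the total visitors for a test with one control and several variants. It uses the standard two-proportion sample size formula and applies a Bonferroni correction, dividing the significance level by the number of variant-versus-control comparisons. Bonferroni is one conservative option among several, not a method this guide prescribes, and the baseline and target conversion rates are hypothetical.

```python
import math
from statistics import NormalDist


def total_visitors(num_variants: int, baseline_rate: float, expected_rate: float,
                   alpha: float = 0.05, power: float = 0.8) -> int:
    """Total visitors across the control and all variants, Bonferroni-adjusted."""
    adjusted_alpha = alpha / num_variants  # one comparison per variant vs. control
    z_alpha = NormalDist().inv_cdf(1 - adjusted_alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    per_group = math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)
    return per_group * (num_variants + 1)  # control plus each variant


# Hypothetical goal: lift a 5% conversion rate to 6%.
for k in (1, 2, 4):
    print(f"{k} variant(s): about {total_visitors(k, 0.05, 0.06):,} visitors in total")
```

The total grows faster than the number of groups, because each group also needs more visitors once the significance level is tightened to account for the extra comparisons.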

Can A/B Testing Visuals Impact SEO Rankings?

You might wonder whether A/B testing visuals can affect your SEO rankings. Visual tests primarily aim to improve user engagement and conversions, but they can influence SEO indirectly: better visuals can reduce bounce rates, increase time on site, and encourage sharing, all of which can support your search performance. However, make sure your tests don’t slow page load times or create duplicate content, since both can hurt your rankings. If a variant lives on a separate URL, a rel="canonical" link pointing to the original page helps avoid duplicate-content issues.

How Do I Handle Conflicting Results From Multiple Tests?

When you encounter conflicting test results, for example when one version boosts engagement but another improves conversions, focus on your primary goal. If increasing sales matters most, prioritize the version that maximizes conversions. Analyze the data carefully, consider external factors, and run additional tests if needed. Let your business objectives guide the decision, and don’t hesitate to combine insights for a balanced approach that serves your overall strategy.
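One way to make "focus on your primary goal" concrete is to decide before the test which metric determines the winner and which metrics act as guardrails that must not get significantly worse. The sketch below encodes that rule. The metric names, the 5% significance level, and the -2% guardrail tolerance are hypothetical choices you would set for your own business, and it assumes higher is better for every metric.

```python
from dataclasses import dataclass


@dataclass
class MetricResult:
    name: str
    lift: float     # relative change vs. control, e.g. 0.04 means +4%
    p_value: float


def decide(primary: MetricResult, guardrails: list[MetricResult],
           alpha: float = 0.05, max_guardrail_drop: float = -0.02) -> str:
    """Let the primary metric decide, unless a guardrail metric got significantly worse.

    Assumes higher is better for every metric; flip the sign of the lift
    for metrics such as bounce rate, where lower is better.
    """
    if primary.p_value >= alpha:
        return "inconclusive: gather more data or run a follow-up test"
    if primary.lift <= 0:
        return "keep control"
    for g in guardrails:
        if g.p_value < alpha and g.lift < max_guardrail_drop:
            return f"review: {g.name} dropped significantly"
    return "ship variant"


# Hypothetical conflict: the variant lifts conversions while engagement dips slightly.
conversions = MetricResult("conversions", lift=0.05, p_value=0.01)
engagement = MetricResult("engagement", lift=-0.01, p_value=0.30)
print(decide(conversions, [engagement]))  # prints "ship variant"
```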

Conclusion

Think of your visual design as a garden—you plant different flowers, observe which thrive, and prune what doesn’t. By testing variations, you nurture a vibrant, thriving space that attracts more visitors. Keep experimenting like a skilled gardener, tending to each element with care. With each data-driven decision, you cultivate a landscape that captivates your audience and blossoms into success. Embrace this ongoing journey of growth, and watch your design flourish.